Alexa_Demo_Video

A conversational UI chatbot that helps you through the long sleepless nights

Context

About 40 million people in the United States suffer from chronic, long-term sleep disorders each year, and an additional 20 million people experience occasional sleep problems.

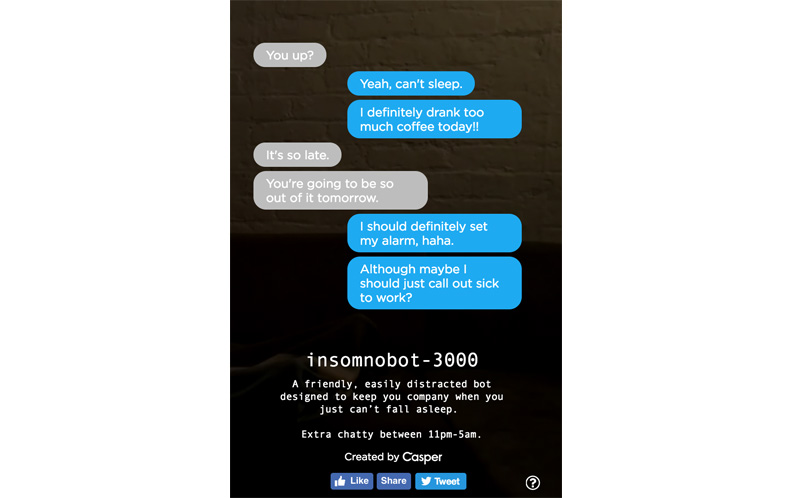

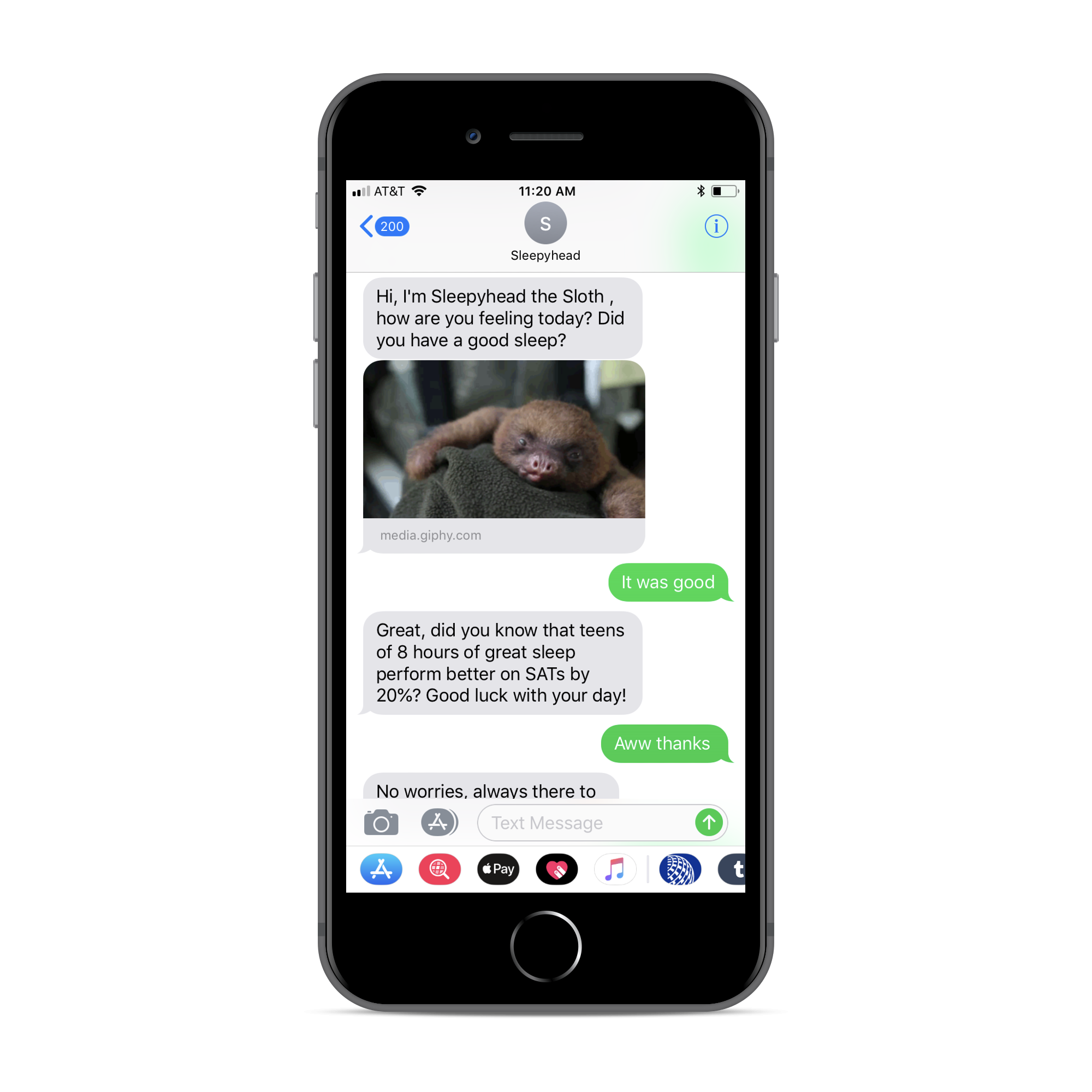

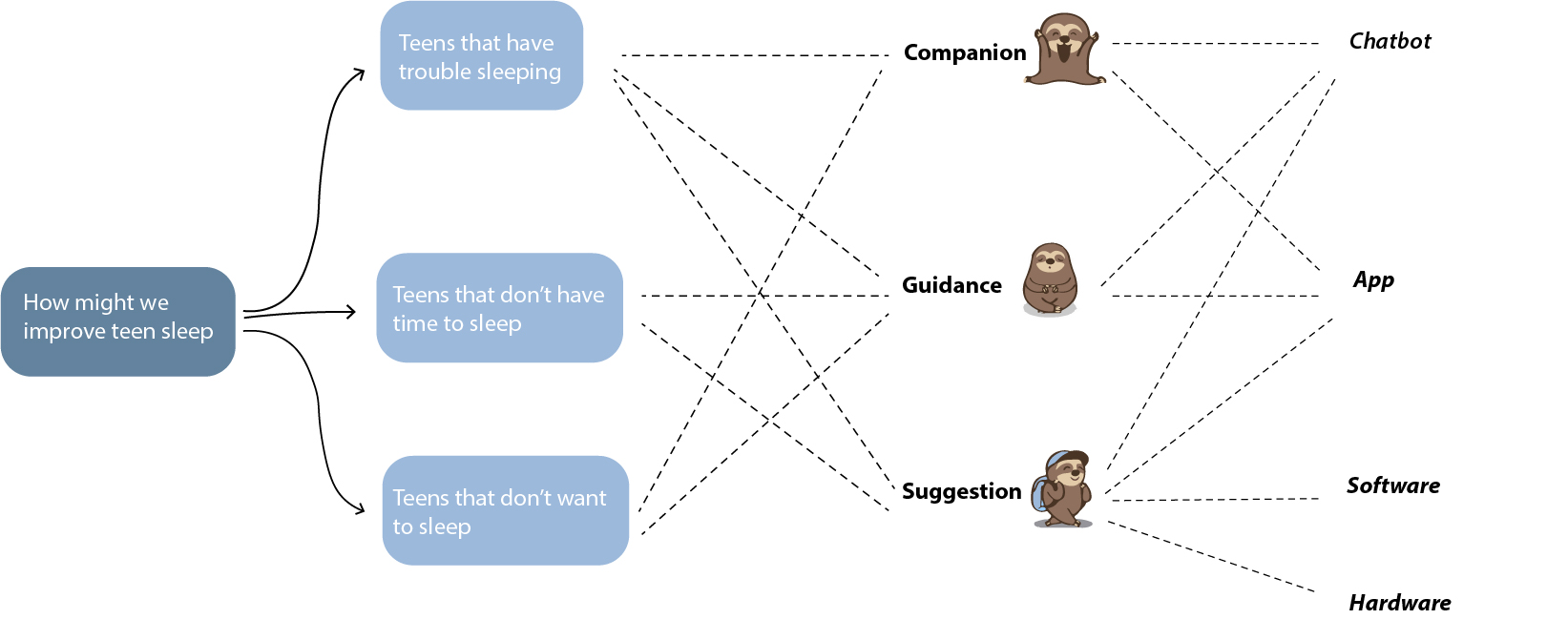

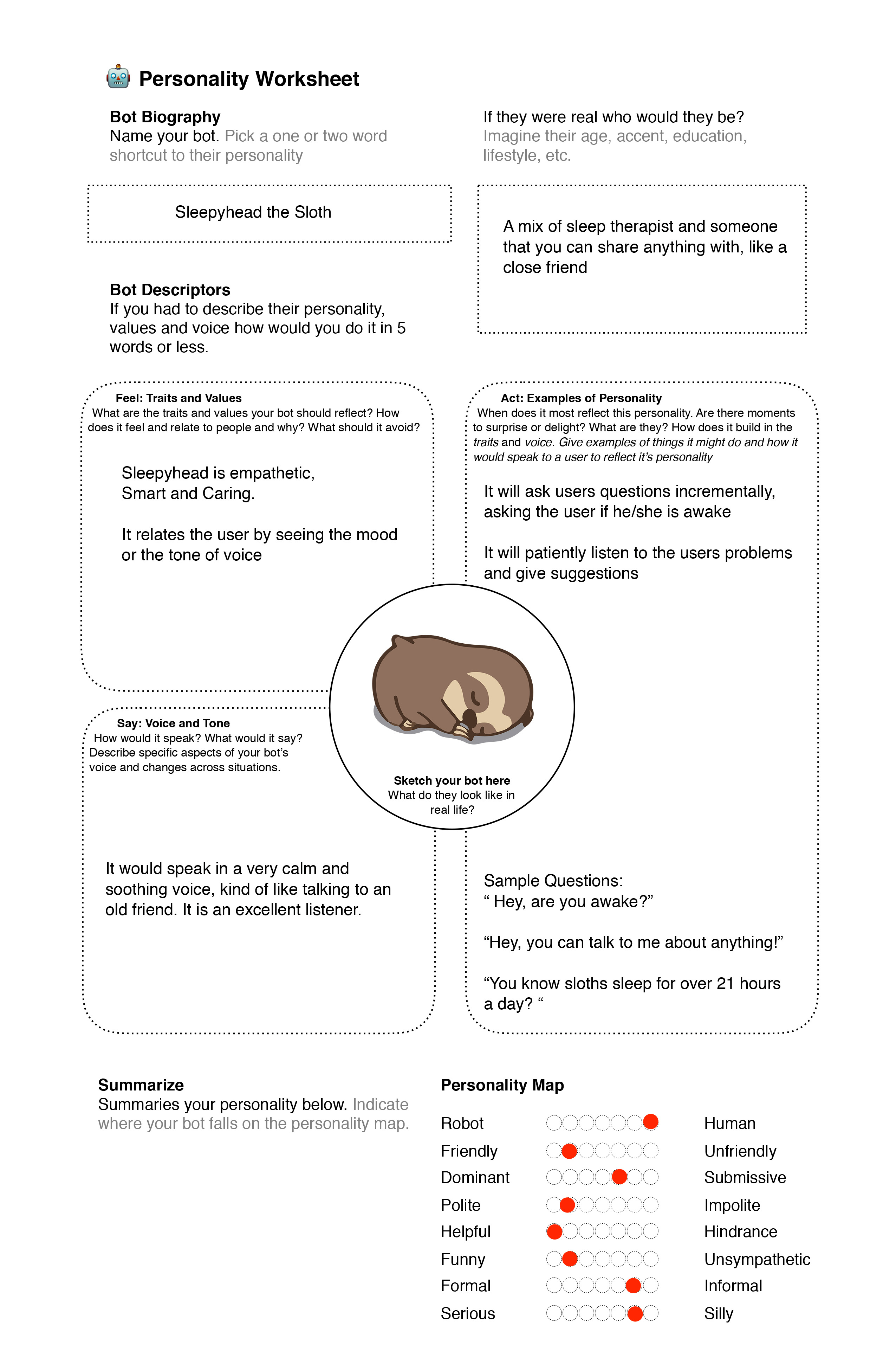

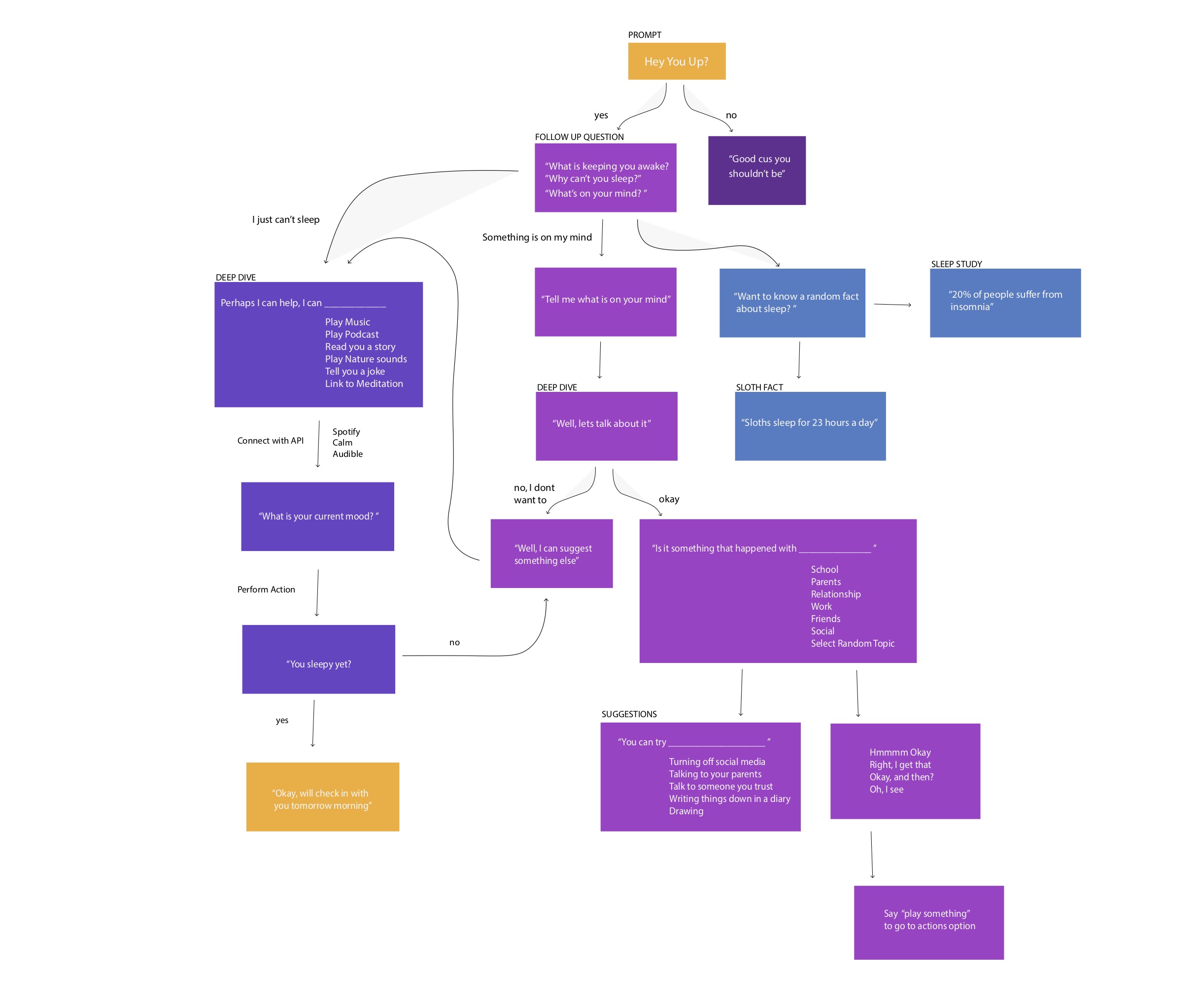

Inspired by [CallFrank] and chatbots like [insomnobot3000] by Casper Mattress, Sleepyhead provides a new way to tackle insomnia: a friend by your side to help you make it through the long sleepless nights. However, the Casper bot is only an SMS bot, which led me to think that something like Google Assistant or Alexa would be more appropriate, since you can talk to it directly. Below is an example of an interaction with the Casper bot:

1. Identifying the Problem

I have noticed that more and more people suffer from insomnia and other sleep problems due to a variety of factors. This has contributed to a widespread rise in feelings of loneliness and isolation, especially among adolescents. I wondered whether Conversational UI could be used to create a sleep companion that talks to you and suggests music late at night. I chose to work with Alexa because a voice interface avoids exposing the user to blue light at night. So what if there were a bot that you could talk to during the night, and that would then send you text updates throughout the day?

3. User Testing

Testing a sample conversation to refine the final conversation design

George [12:52 PM] Hey, you up?

Sleepyhead [12:53 PM] Yup, always. Are you ?

George [12:53 PM] Yeah, I can't sleep

Sleepyhead [12:53 PM] What's keeping you awake?

George [12:53 PM] ALL OF THESE THOUGHTS RACING THROUGH MY BRAIN ITS TRUEEEE

Sleepyhead [12:53 PM] Hmmmm, do you want to talk about it ?

George [12:54 PM] I want to go to sleep

Sleepyhead [12:54 PM] Well, usually talking about it helps

George [12:54 PM] bark

Sleepyhead [12:54 PM] I dont quite understand, do you want to be entertained or do you want to talk about it

George [12:54 PM] I keep being haunted by dreams of a giant dog and I can't sleep without fear of the dog

Sleepyhead [12:55 PM] Well lets dig deeper

George [12:55 PM] dogs dig.... im scared

Sleepyhead [12:55 PM] Maybe it is something from your childhood?

George [12:56 PM] No.but PBS kids was a big part of my childhood the dog is always red and big

Sleepyhead [12:56 PM] I am scared of big dogs too

George [12:56 PM] awwww

Sleepyhead [12:57 PM] clifford was scary

George [12:57 PM] DONT SAY HIS NAME

Sleepyhead [12:57 PM] whoever thought of making a red dog is crazy

George [12:57 PM] _-

Sleepyhead [12:57 PM] sorry would you like me to do something to distarct you? I can sing you a song or play some calming music or a tedx podcast might help alleviate your fear

George [12:58 PM] I would like to not think about the red thing a song would be nice

Sleepyhead [12:58 PM] alright then, I will sing you a lullaby

George [12:58 PM] i am ready

Sleepyhead [12:58 PM] ba ba black sheep

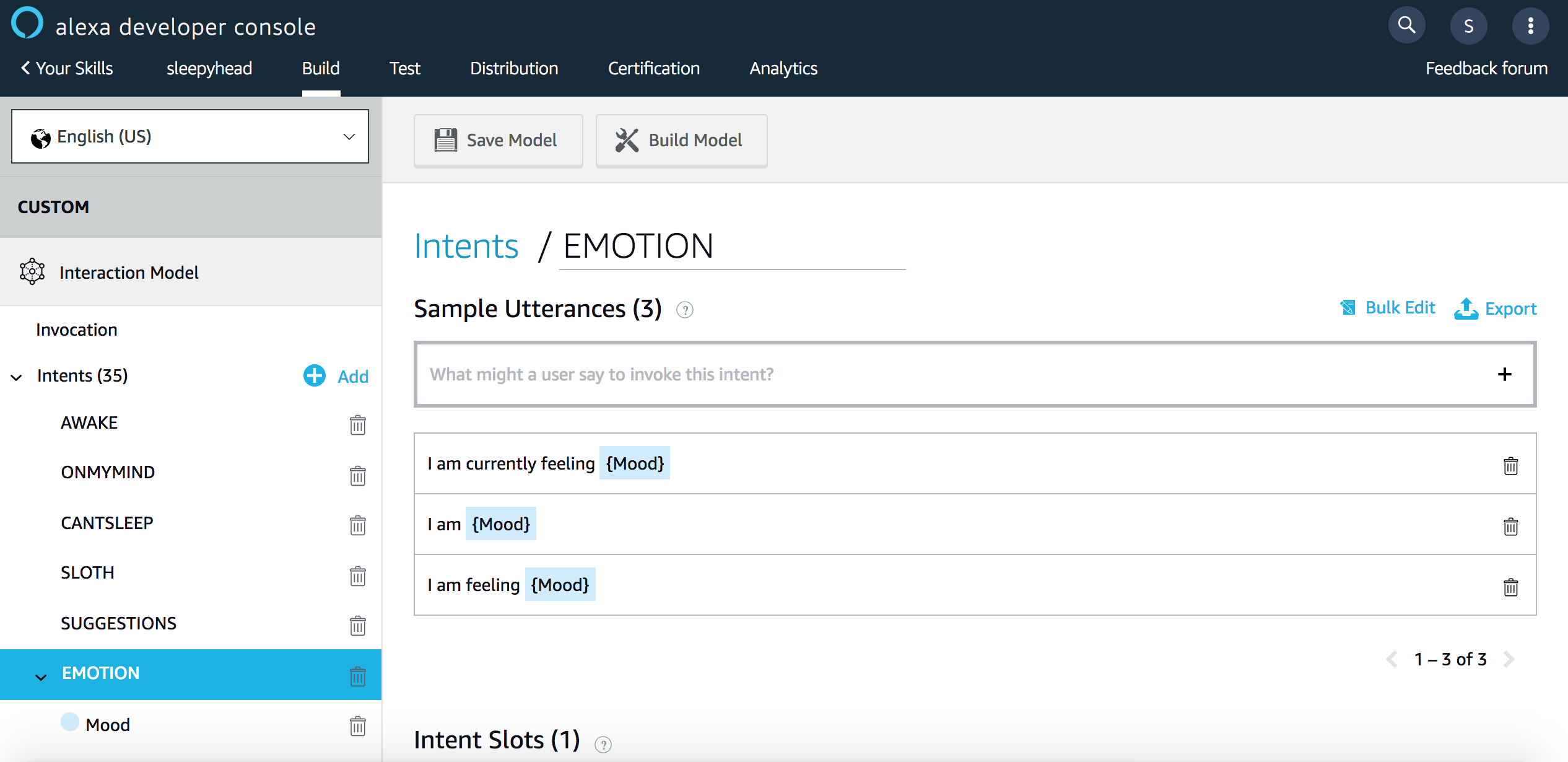

1. Designing the Conversation

In Alexa, the conversation is designed through intents in the Alexa Developer Console. Similar to Dialogflow for the Google Assistant, you define intents that are triggered by different user utterances. The challenge is that you have to be very specific when designing the conversation, and you have to predict what the user might say and how they might say it. Then, on the server side, each intent is mapped to a different speech response. The intents reach the server through an HTTP request that Alexa sends to the webhook '/incoming/alexa'. Below is a section of the sample code, written with the Ruby Sinatra framework. [app.rb]
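Before the project's own handlers, the request/response cycle behind an intent can be sketched without any framework. This is only an illustration under stated assumptions, not the contents of app.rb: `speech_for` and `handle_alexa_request` are hypothetical helper names, and the sample request is trimmed to the fields used here. Only the JSON field names (`request.intent.name`, `outputSpeech`, `shouldEndSession`) follow Alexa's request and response format.

```ruby
require "json"

# Hypothetical lookup: intent name => what the skill should say.
def speech_for(intent_name)
  responses = {
    "HEY"   => "Hi! I am awake, I have missed you. How was your day?",
    "AWAKE" => "Oh! What's keeping you awake?"
  }
  # Fall back to a generic reply for intents the table does not know.
  responses.fetch(intent_name, "Sorry, I did not catch that.")
end

# Takes the JSON body Alexa POSTs to the webhook and returns the JSON reply.
def handle_alexa_request(body)
  payload = JSON.parse(body)
  intent_name = payload.dig("request", "intent", "name")

  JSON.generate(
    "version" => "1.0",
    "response" => {
      "outputSpeech" => { "type" => "PlainText", "text" => speech_for(intent_name) },
      "shouldEndSession" => false # keep listening for the user's reply
    }
  )
end

# Usage with a trimmed-down IntentRequest
sample = JSON.generate(
  "request" => { "type" => "IntentRequest", "intent" => { "name" => "AWAKE" } }
)
reply = JSON.parse(handle_alexa_request(sample))
puts reply["response"]["outputSpeech"]["text"]
```

In the project itself this dispatch is done by the `on_intent` blocks, so the lookup table here is just a stand-in for those handlers.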

    # Greeting intent: keep the session open so the user can reply
    on_intent("HEY") do
      response.should_end_session = false
      response.set_output_speech_text("Hi! I am awake, I have missed you. How was your day?")
    end

    # Introduction intent
    on_intent("SLOTH") do
      response.should_end_session = false
      response.set_output_speech_text("Hi! I am Sleepyhead the Sloth, your sleep companion. I am here to keep you company during the long nights. Say I am awake to see how I can help you")
    end

    # Intent responding to the user being awake
    on_intent("AWAKE") do
      response.set_output_speech_text("Oh! What's keeping you awake?")
      logger.info 'Here processed'
      response.should_end_session = false
    end

    # Intent responding to something on the user's mind
    on_intent("ONMYMIND") do
      response.set_output_speech_text("Tell me what's on your mind or I can tell you a random fact about sleep")
      logger.info 'Here processed'
      response.should_end_session = false
    end

2. Storing Session_Attributes

I soon realized that, for a productive and natural conversation, it is important to remember what the user said in previous turns and to ask follow-up questions when needed. Since I did not store the user's information in a database, I used Alexa's session_attributes to hold the information captured in the slots for the duration of the session. Slots are the variable parts of an utterance. For example, the [Mood] slot might contain a variety of emotions such as [happy, sad, mellow, chill, angry]. The information in the slots can then be stored to trigger an action or be used later in the conversation.
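The round trip described above can be sketched with plain hashes. This is an illustration under stated assumptions rather than the project's code: `mood_from` and `reply_with_session` are hypothetical helpers, and the sample requests are trimmed to the fields involved. In Alexa's JSON format, slot values arrive under `request.intent.slots`, previously saved attributes come back under `session.attributes`, and the skill returns updated attributes in the top-level `sessionAttributes` key.

```ruby
# Read the Mood slot value from a parsed IntentRequest (nil if absent).
def mood_from(payload)
  payload.dig("request", "intent", "slots", "Mood", "value")
end

# Build a reply that carries the session attributes forward,
# adding the mood when the current request contains one.
def reply_with_session(payload, text)
  attributes = (payload.dig("session", "attributes") || {}).dup
  mood = mood_from(payload)
  attributes["mood"] = mood if mood

  {
    "version" => "1.0",
    "sessionAttributes" => attributes,
    "response" => {
      "outputSpeech" => { "type" => "PlainText", "text" => text },
      "shouldEndSession" => false
    }
  }
end

# First turn: the user states a mood, which fills the Mood slot.
first_turn = {
  "session" => { "attributes" => {} },
  "request" => {
    "type" => "IntentRequest",
    "intent" => {
      "name" => "EMOTION",
      "slots" => { "Mood" => { "name" => "Mood", "value" => "mellow" } }
    }
  }
}
reply = reply_with_session(first_turn, "Ok, what would you like me to do?")

# Second turn: Alexa sends the stored attributes back with the next request.
second_turn = {
  "session" => { "attributes" => reply["sessionAttributes"] },
  "request" => { "type" => "IntentRequest", "intent" => { "name" => "MOOD_MUSIC" } }
}
puts second_turn.dig("session", "attributes", "mood")
```

Because the attributes live only in the session, they disappear once the session ends, which is why a database would be needed to remember a user across nights.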

    on_intent("EMOTION") do
      # Log the raw slots for debugging
      slots = request.intent.slots
      puts slots.to_s

      # Save the user's sentiment from the Mood slot
      mood = slots["Mood"].to_s
      response.should_end_session = false

      # search_track(mood)

      # Store the mood in the session so later intents can use it
      session_attributes["mood"] = mood

      # response.set_output_speech_text("Ok, I just made you a personalized mood playlist")
      response.set_output_speech_text("Ok, what would you like me to do?")
    end

    on_intent("MOOD_MUSIC") do
      # Build a Spotify playlist from the mood stored earlier in the session
      mood = session_attributes["mood"]
      if mood.nil?
        # No mood stored yet: ask the user for one
        response.set_output_speech_text("I am sorry I didn't quite get that, but what are you in the mood for?")
      else
        search_track(mood)
        response.set_output_speech_text("Ok, I just made you a personalized mood playlist")
      end
    end

    # Search Spotify for tracks matching the mood input and add them to a new playlist.
    # Needs require 'json' and require 'rspotify' at the top of app.rb.
    def search_track(trackname)
      # Load the saved Spotify user credentials (JSON) from disk
      user_hash = JSON.parse(File.read("spotify-auth.txt"))

      RSpotify.authenticate(ENV["SPOTIFY_CLIENT_ID"], ENV["SPOTIFY_CLIENT_SECRET"])
      # spotify_user = RSpotify::User.new(@@spotify_auth)
      puts user_hash
      spotify_user = RSpotify::User.new(user_hash)

      # Access private data
      puts spotify_user.country #=> "US"
      puts spotify_user.email #=> "example@email.com"

      playlist = spotify_user.create_playlist!('mood-music') # automatically refreshes token
      puts playlist

      tracks = RSpotify::Track.search(trackname.to_s)
      playlist.add_tracks!(tracks)
      playlist.tracks.first.name #=> "Somebody That I Used To Know"

      # Return the first song found
      tracks.first
    end

Challenges

Throughout the process, I faced many technical and non-technical challenges that I had to overcome.

Technical Challenges

A major technical challenge was working within Alexa's rigid response format. Alexa only accepts very specific types of responses, such as <text> or <ssml>, which restricts how a response can be formatted. Another difficulty was the Natural Language Processing aspect of the conversation. Different users phrase things differently, so it is extremely difficult to predict what responses will be given, and training on sample utterances is currently still quite rigid and limited.
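The two speech formats mentioned above differ only in the `outputSpeech` object of the response. A minimal sketch, assuming hypothetical helper names (`plain_speech`, `ssml_speech`) that are not part of the project; the `PlainText` and `SSML` types and the `<speak>` and `<break>` tags come from Alexa's response format and SSML support:

```ruby
require "cgi"

# Plain text: Alexa reads the string as-is.
def plain_speech(text)
  { "type" => "PlainText", "text" => text }
end

# SSML: the markup must be wrapped in <speak>. Here a pause is inserted
# between sentences to slow the delivery down for a bedtime setting.
def ssml_speech(sentences, pause: "1s")
  # Escape characters such as & and < so the result stays valid XML.
  escaped = sentences.map { |sentence| CGI.escapeHTML(sentence) }
  pause_tag = %(<break time="#{pause}"/>)
  { "type" => "SSML", "ssml" => "<speak>#{escaped.join(pause_tag)}</speak>" }
end

puts plain_speech("Take a deep breath.")["text"]
puts ssml_speech(["Take a deep breath.", "Now let it out slowly."])["ssml"]
```

Either hash would take the place of `outputSpeech` in the JSON reply, so SSML adds some expressiveness but the overall response shape stays fixed.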

Non-Technical Challenges

I struggled with writing the right responses for users and catering to their different needs. With more extensive user research, I should be able to modify and refine the conversation to be more empathetic.

Takeaways

Overall, this was a very rewarding exercise that gave me a deeper understanding of how conversational UI works and what potential it has for improving people's lives. It also exposed me to broader ethical issues around data collection and privacy. I would love to see where more user research and the incorporation of a database would take the project.

Created

October 19th, 2018